{{customer-drata}}

AI is easy to experiment with but hard to get right in production. At small scales, it’s simple to test ideas—engineers can spin up models, tweak prompts, and deploy workflows manually. But as AI-powered systems grow, new challenges emerge:

Iteration slows down .

Every change risks breaking something else. Updating a single prompt can disrupt an entire workflow.

Observability is limited .

When an AI system gets something wrong, debugging can take hours. Without full visibility, errors remain unexplained.

Deployment gets risky.

Pushing updates requires engineering effort, making it hard to move fast without breaking things.

For companies using AI in high-stakes environments, these challenges aren’t just frustrating—they’re blockers to growth. Drata, the leading trust management platform, ran into this problem firsthand. Their AI-powered security questionnaire system needed to handle thousands of unique customer environments, each with strict data separation and evolving compliance rules.

Drata needed to scale their AI workflows while ensuring accuracy, security, and reliability. That’s where Vellum came in.

We sat down with Kevin, an AI engineer at Drata, to dive into their journey—from the challenges of building V1 to leveraging Vellum to create a powerful, enterprise-grade AI solution.

Isolated vector databases per customer

Compliance is a data-sensitive industry. Drata manages 7 ,000+ customers, and each one requires strict data separation. A one-size-fits-all AI model wouldn’t work—every customer needed their own isolated knowledge base to ensure privacy and security.

“In our system, each customer has their own database. That means 7,000+ databases, physically separated, and in some cases, multiple per client. Keeping this structured while running AI at scale was a huge challenge,” Kevin explained.

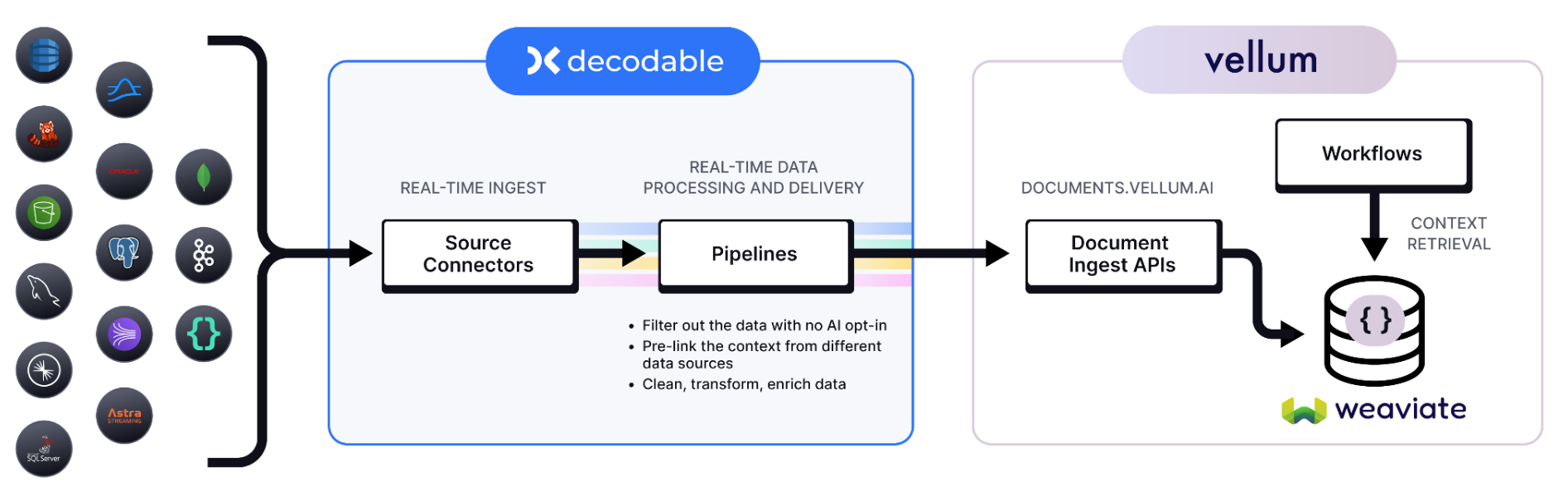

To solve for that, each of their customer’s data is stored in its own isolated vector database thru Vellum’s out of the box RAG component. Drata now manages over 28,000 separate vector databases. Vellum ensures this strict separation while maintaining high-performance retrieval.

“When tenant A makes a change to their database, it’s only reflected in their own document index. This is crucial for questionnaire automation,” Kevin adds.

Engineering and product work closely together

Drata’s AI engineers wanted to shift the bulk of their time from infrastructure, hosting, and debugging to building and refining AI workflows. But without the right tools, maintaining AI pipelines meant countless hours of manual coding and troubleshooting.

“Our early AI automation was built in pure Python. Every tweak, every update, every bug fix—it all required engineering time,” he shared.

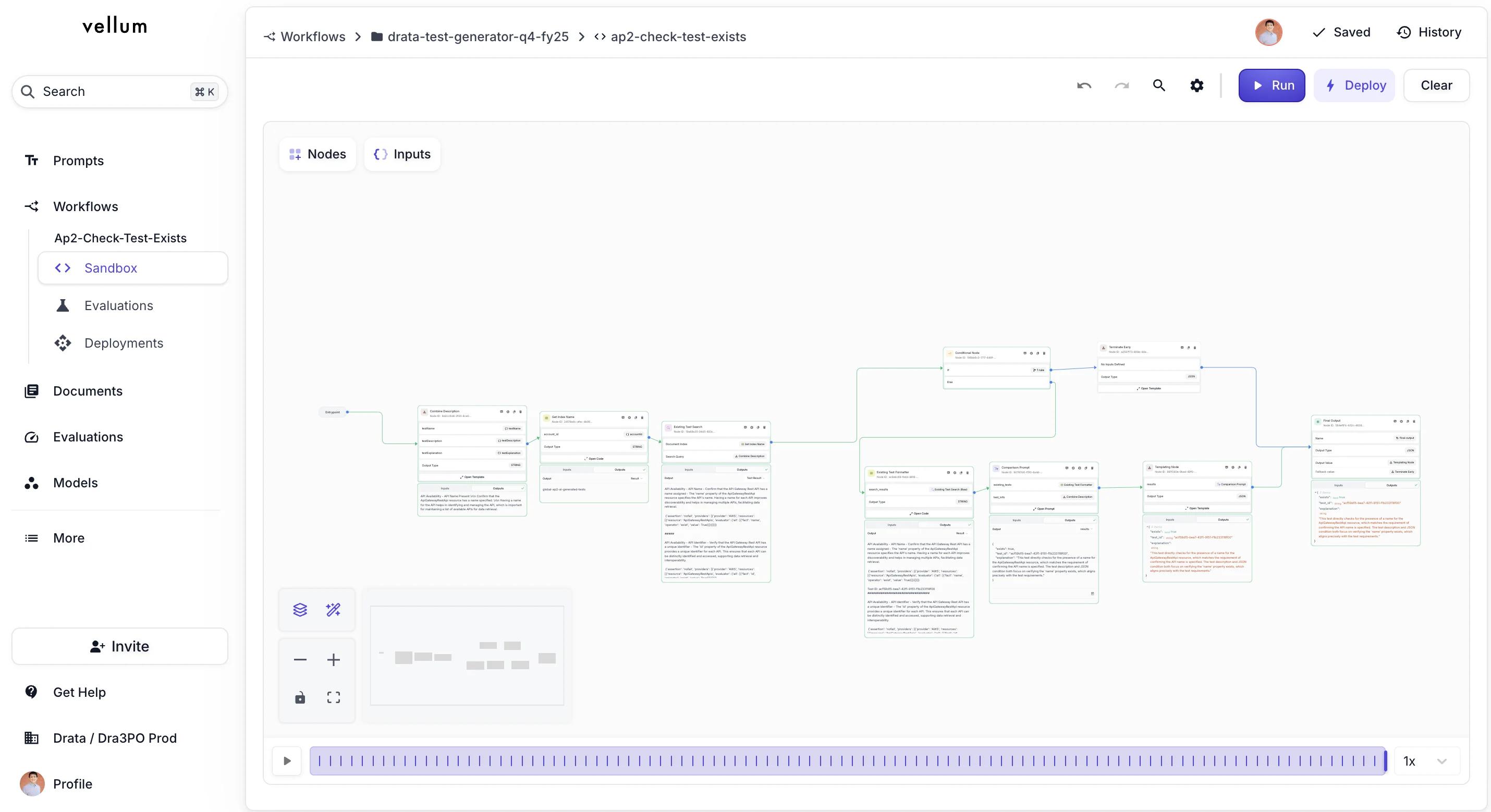

Now, Drata’s product managers—who understand compliance best—can build and refine AI workflows without needing engineers to write code using Vellum Workflows . After the initial workflow is set up, engineers can take it a step further using the Vellum SDK and integrate with internal systems, or add custom logic as needed.

This balance lets Drata’s team move fast—product managers can iterate on workflows independently, while engineers focus on deeper optimizations and scaling.

Proactively improving AI accuracy

AI workflows must be accurate, reliable, and compliant—but ensuring that at scale is a challenge. Without the right safeguards, small changes can introduce unintended errors, regressions, or compliance risks.

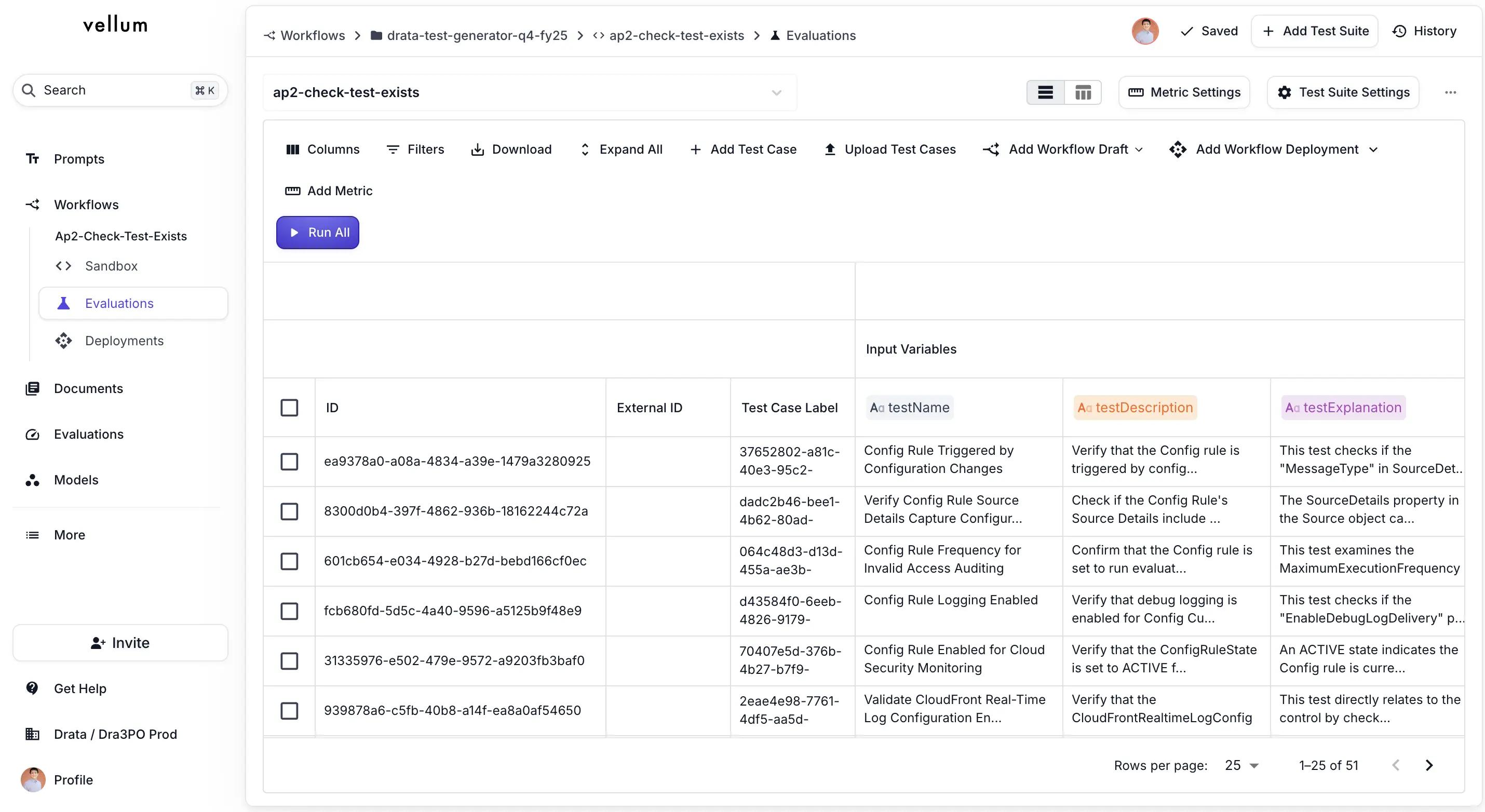

Drata solves this by integrating Vellum’s evaluation framework into their AI development lifecycle, creating a system that prevents issues before they impact customers.

1/ Rigorous Testing Before Deployment

Before any AI update goes live, it is tested against a suite of 100+ real-world security questionnaire questions. This ensures that every response aligns with industry compliance standards, customer expectations, and previous correct answers.

“Security questionnaires vary across customers, so we need AI that adapts while maintaining precision. Every update goes through our test suite before it reaches production.”

2/ Capturing Regressions Early

Compliance workflows evolve constantly. Customers update policies, security requirements shift, and AI models need frequent updates to stay accurate. But with a slow, manual process, Drata’s AI team couldn’t iterate fast enough without risking performance regressions.

Now, with Vellum Evals, if a new version introduces inconsistencies, the system flags the issue before deployment, preventing inaccurate or incomplete answers from reaching customers.

“If a customer flags an incorrect AI-generated response, we add that case to our evaluation suite, refine the workflow, and ensure it never happens again,” Kevin shared.

3/ Proactive AI Monitoring with Vellum SDK

Drata doesn’t just react to issues—they proactively monitor AI performance with nightly evaluation jobs powered by the Vellum SDK.

"We run evaluations every night through the Vellum SDK. If an AI response starts drifting in quality, we catch it before it affects customers."

Instant iteration and full observability

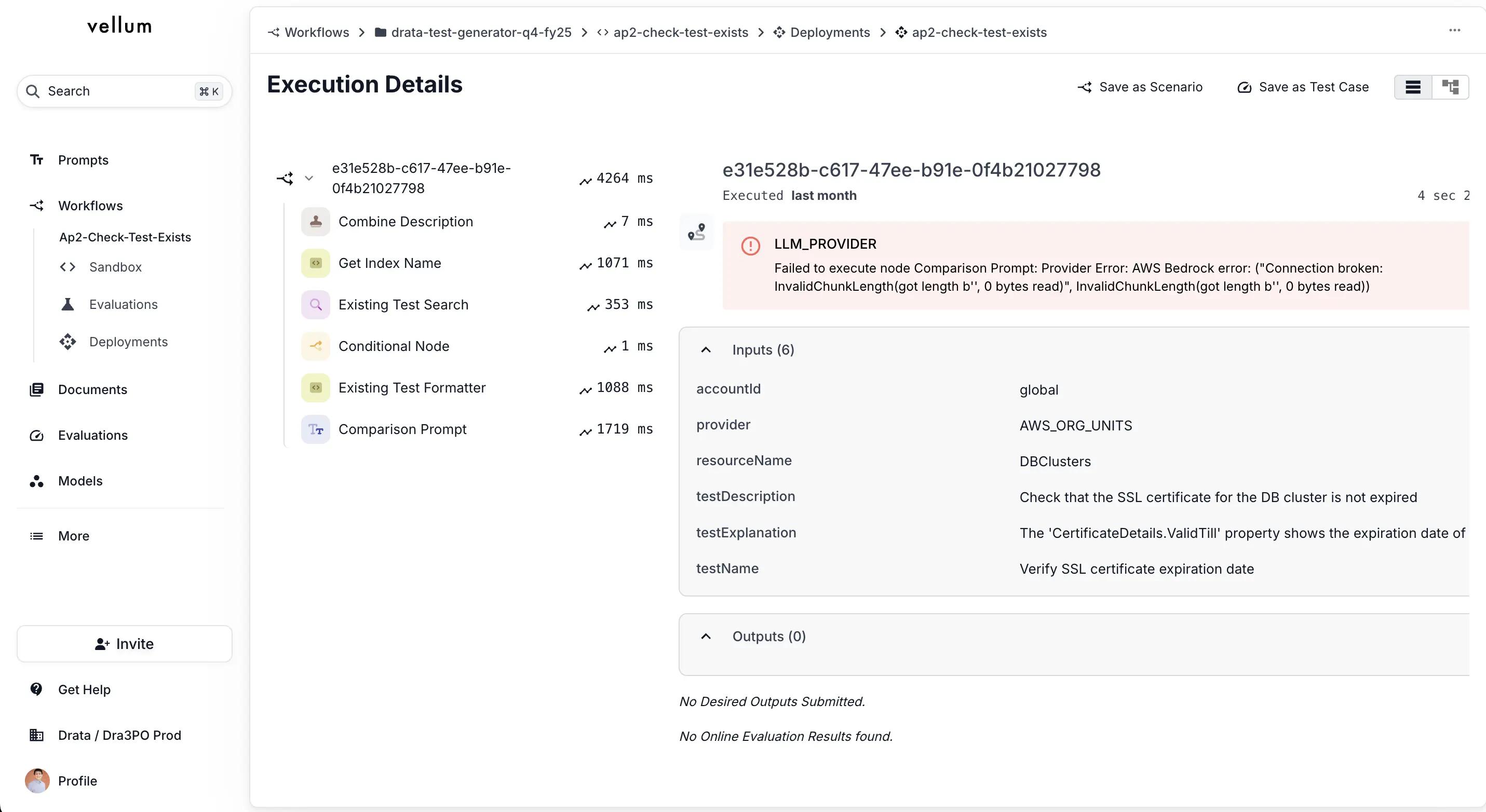

Today, Drata leverages Vellum’s Observability Tools to decouple deployments from their app deployment. With one-click deployment, Drata can push fixes immediately while ensuring they don’t break existing workflows.

On top of that, they monitor AI workflows in production with granular traceability and real-time debugging. Every AI workflow execution is logged in Vellum, allowing engineers to trace the exact inputs, outputs, and decision paths taken at each step.

If an AI-generated response is incorrect, they can replay the execution to see where it went wrong—whether it was a prompt, retrieval issue, or reasoning failure.

"If a customer reports an issue, we trace the execution, fix the workflow, and ensure it never happens again—all through Vellum."

The Impact: Enterprise-Grade Compliance Automation

With Vellum, Drata achieved enterprise-grade AI automation without sacrificing security, accuracy, or speed:

1/ Security questionnaires automated in 60 days – even with strict compliance requirements.

2/ Engineering autonomy – AI teams can iterate independently without waiting on application developers.

3/ Faster, safer AI updates – One-click deployment ensures instant improvements without breaking existing workflows.

4/ High observability – Every AI execution is logged, traced, and easily debugged, providing full visibility into decision-making.

“It’s easy to get an AI application running these days,” Drata’s lead AI engineer said. “But getting it to an enterprise-grade level—with privacy, monitoring, and evaluation—is hard. Vellum makes it possible. ”

Build AI Enterprise-Grade Solutions With Velum

Drata turned a manual, time-consuming compliance burden into a secure, scalable, and fully automated AI workflow—all while maintaining accuracy and control.

If your team wants to deploy AI faster, iterate with confidence, and eliminate engineering bottlenecks, Vellum can help.

Request a demo today to connect with an AI expert and equip your engineering and product teams with the tools they deserve.